This was originally posted as an article in the Customer Strategist Journal.

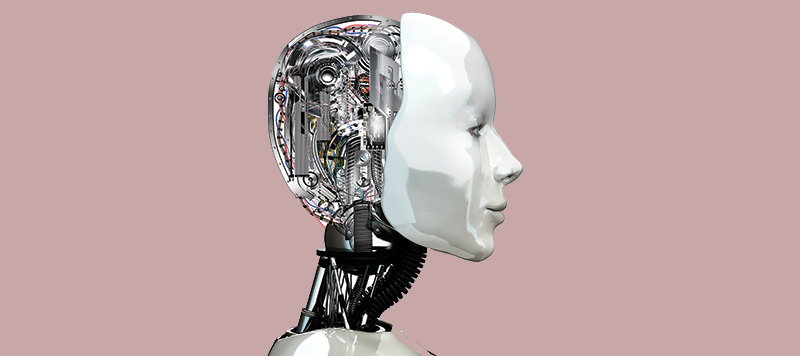

There’s so much data out there, it’s hard to wrap your head around it. Luckily you don’t have to. Technology is available to do the work for us with massive data sets, often in the blink of an eye. And more importantly, computers can learn while doing so.

With the advent of Big Data and the interest in deriving signals and insights from it, machine learning has emerged as a valuable complement, and in some cases an attractive alternative, to more traditional analytic techniques. The idea behind machine learning is to teach computers to learn from data continuously and get smarter as they perform. It’s defined as the study and construction of algorithms that can learn from and make predictions on data.

Machine learning has been around for a while, and it’s being applied in some very interesting areas. In the consumer arena, Facebook’s automatic image tagging is based on a machine learning algorithm that learns from the photos you manually tag to identify you and your friends in future pictures. And self-driving cars use image processing and algorithms to learn where there’s a stop sign on the road or whether a car is approaching, based on what the cameras around the car see.

The growth in machine learning is accelerating. The market is predicted to reach $15.3 billion by 2019, with an average annual growth rate of 19.7 percent, according to BCC Research. Machine learning’s growth is due in large part to three important factors:

• The dramatic increase in the availability of different types of data

• The reduction in the cost of the computing power needed to run the algorithms

• The growth in inexpensive massively parallel processing architectures

The realisation by businesses that they can continuously leverage the vast amounts of data they have, and act on the resulting insights, has also driven the growth of and interest in machine learning techniques.

Machine learning can be applied in almost any industry and has a few key distinctive benefits: it is self-learning, it gets better over time as it is exposed to new data, and it can be applied in real time. For example, IBM’s Deep Blue got really good at playing chess, and IBM’s Watson won the game show Jeopardy! Watson is now being applied in situations like replacing contact centre representatives to handle straightforward queries, or playing the role of primary care physician for a routine checkup. Machine learning, however, is not just for the straightforward and the routine. It can also handle very complex tasks, such as self-driving or learning Japanese, as Watson recently did. These more complex activities are made possible via deep learning and multilayered neural networks.

Machine learning is now beginning to move into the marketing space, so companies can get smarter about their customers. With it, companies can be more nimble as they improve customer experiences across the board, serving up relevant content and offers or real-time responses during customer interactions.

Imagine if you could design an algorithm that leverages all of the profiles, actions, behaviours, and information about your customers across all channels to deliver the next best action. This would be a machine learning algorithm that would also continuously learn from the customer data being generated and get smarter over time.

Transparency Market Research estimates that predictive analytics software will be a big early growth category for machine learning applications. It’s expected to reach $6.5 billion worldwide in 2019, up from $2 billion in 2012. There is a lot of potential insight for marketers to work with.

How machine learning differs from traditional analytics

Before jumping in with investments around machine learning, it’s important to know that machine learning is fundamentally different from traditional econometric analytic approaches. There are a few key ideas that set it apart from other ways of using analytics:

Use all the data when feasible, not a sample. Traditional analytics relies on gathering insight from representative samples, rather than complete data sets, due to time, size, and computing power limitations. With the decrease in storage prices and increase in computing power, our ability to work with all of the available data has increased significantly. This way more information can be extracted, more quickly, even for small subpopulations. This means that marketers can comfortably analyse small target segments without having to worry about whether they will be well represented in samples.
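To make the sampling point concrete, here is a minimal Python sketch. The population size, segment size, and sampling rate are made-up numbers chosen for illustration, and `segment_counts` is a hypothetical helper, not part of any analytics product. It counts how many members of a small target segment survive into a 1 percent random sample.

```python
import random

def segment_counts(population_size=100_000, segment_size=20,
                   sample_rate=0.01, seed=0):
    """Count members of a small segment in the full data and in a random sample.

    Assumption for illustration: customer ids 0..segment_size-1 form the segment.
    """
    rng = random.Random(seed)
    sample_size = int(population_size * sample_rate)
    # Draw customer ids without replacement, as a simple random sample would.
    sample = rng.sample(range(population_size), sample_size)
    in_sample = sum(1 for customer_id in sample if customer_id < segment_size)
    return segment_size, in_sample

full, sampled = segment_counts()
print(f"segment members in the full data: {full}")
print(f"segment members in a 1% sample:   {sampled}")
```

With these assumed numbers, a 1 percent sample is expected to contain only about 0.2 segment members on average, so the segment will often be missing from the sample altogether, while the full data set always contains all 20.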

It is OK to work with messy, unclean data. Traditional analytics relies heavily on creating a very clean data set before developing analytical models. This is partly driven by the scarcity of information contained in many databases. When there is a lot of data available, it is actually better to deal with a little messiness (e.g., words spelled in different ways in a document) and work with large volumes of data than to curate small amounts of clean data. Consider Google Translate: underlying it is a machine learning algorithm that leverages vast amounts of data from the Internet, which can be quite messy and unclean. Prior to this effort, most language translation projects had worked with smaller amounts of curated data but had not been as successful at accurately automating translation. Of course, having completely clean data is always preferable.

Algorithmic details may be hard to decipher, leading users to cede some control. Certain machine learning algorithms create their own feature sets that correlate most with the KPI of interest. Though they may be highly predictive, these feature sets may be unknown and have no real business meaning. For example, a movie may have features like genre, lead actor, and language. A machine learning algorithm on its own may extract a new feature that is a complex combination of the three features above and this complex combination may not have any direct business relevance other than the fact that it is a good predictor. This point is also closely linked to the next one around correlation versus causation.
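The movie example can be sketched in a few lines of Python. Everything here is a toy assumption: the eight rows are invented, the "liked" outcome is deliberately constructed to depend on the combination of genre and language, and the crossed feature is built by hand, whereas a real machine learning algorithm would discover such a combination on its own. `majority_accuracy` is a hypothetical helper that scores a feature by predicting the most common outcome for each of its values.

```python
from collections import Counter, defaultdict
from itertools import product

def majority_accuracy(rows, key):
    """In-sample accuracy of predicting the majority outcome per feature value."""
    outcomes = defaultdict(Counter)
    for row in rows:
        outcomes[key(row)][row["liked"]] += 1
    correct = sum(max(counts.values()) for counts in outcomes.values())
    return correct / len(rows)

# Toy data: a viewer likes a movie only for certain genre/language pairings.
rows = [
    {"genre": g, "actor": a, "language": l,
     "liked": int((g == "action") != (l == "en"))}
    for g, a, l in product(["action", "drama"], ["A", "B"], ["en", "fr"])
]

single = {name: majority_accuracy(rows, lambda r, n=name: r[n])
          for name in ("genre", "actor", "language")}
# The derived feature: a cross of genre and language with no obvious business name.
crossed = majority_accuracy(rows, lambda r: (r["genre"], r["language"]))

print(single)
print("genre x language:", crossed)
```

In this constructed data set no single feature predicts better than a coin flip, while the crossed feature predicts perfectly. The cross is a good predictor, yet "genre crossed with language" is not a concept a business user would normally ask for, which is the loss of interpretability the paragraph describes.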

Correlations are OK; not everything needs to be causal. Machine learning focuses more on making predictions and less on understanding the process that generates the data being predicted. Given that, one is likely to find patterns that are correlations rather than causal relationships. For example, Google Flu Trends correlated millions of search terms with actual outbreaks of the flu to determine which terms were predictive. This enabled a real-time view of where the flu was likely to happen and gave an early warning signal that could be complementary to other, more accurate sources of information, such as the Centers for Disease Control and Prevention.
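A rough sketch of the idea of ranking search terms by their correlation with flu cases follows. The weekly numbers and the three search terms are invented for illustration, and this is not how Google Flu Trends was actually implemented; it only shows the general technique of scoring candidate signals by Pearson correlation with an outcome.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

# Hypothetical weekly flu case counts and search volumes (made-up numbers).
flu_cases = [10, 20, 40, 80, 60, 30]
search_volume = {
    "flu symptoms": [12, 22, 39, 85, 58, 33],
    "cough medicine": [5, 9, 22, 35, 30, 14],
    "basketball scores": [50, 48, 52, 49, 51, 50],
}

# Rank terms from most to least correlated with the outcome.
ranked = sorted(
    ((term, pearson(volume, flu_cases)) for term, volume in search_volume.items()),
    key=lambda pair: pair[1],
    reverse=True,
)
for term, r in ranked:
    print(f"{term:20s} r = {r:.3f}")
```

Nothing in this ranking says that searching for "flu symptoms" causes flu, or even explains why the two move together. The term is simply useful as a predictor, which is all the approach asks of it.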

Algorithms are self-learning and fine-tune themselves. In traditional analytics, human intervention is required to refit or re-estimate models. But self-learning algorithms operate differently. Given the right set of data, these algorithms can learn from their own mistakes and mutate themselves to be more predictive over time. They can also adjust to an underlying change of conditions, as long as that is reflected in the data. Of course the change and error correction is not instantaneous, and can take a few cycles of data. Key to this process is continuously feeding in data that corresponds to the outcome KPIs.
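One simple way to picture a self-correcting algorithm is an online model that nudges itself after every new observation. The sketch below is an assumption-laden toy: a one-parameter model `y = w * x` updated by stochastic gradient descent, fed a hypothetical data stream in which the true relationship shifts from 2 to 5 halfway through. It stands in for the general idea and is not any particular product’s method.

```python
def online_fit(stream, learning_rate=0.05):
    """Update a single weight after each (x, y) observation; return its history."""
    w = 0.0
    history = []
    for x, y in stream:
        error = w * x - y                # how wrong the current prediction is
        w -= learning_rate * error * x   # correct the weight using that mistake
        history.append(w)
    return history

# Hypothetical stream: the underlying condition changes after 150 observations.
xs = [1.0, 2.0, 3.0] * 100
stream = ([(x, 2.0 * x) for x in xs[:150]] +
          [(x, 5.0 * x) for x in xs[150:]])

history = online_fit(stream)
print(f"weight just before the change:  {history[149]:.3f}")
print(f"weight one step after the change: {history[150]:.3f}")
print(f"weight at the end of the stream: {history[-1]:.3f}")
```

The weight settles near 2, moves only part of the way on the first observation after the change, and then settles near 5 as more outcome data arrives. No one refits the model by hand, but the correction does take a few cycles of data, and it happens only because the outcome values keep being fed back in.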

Remember, machine learning is not a panacea. Yes, it has its advantages, but it’s not perfect. Noisy data or misread algorithms can cause inaccuracies. However, machine learning has real promise as the way to approach analytics for the future. It can solve difficult problems that are not solvable by other means. It provides the insights and potential for taking advantage of real-time information and updating corporate beliefs quickly, which can provide a competitive advantage for those who adopt it. Although machine learning is still a long way from nirvana, it has its place and is definitely worth adopting for greater gains.